Revolutionizing A/B Testing with AI and Amazon Bedrock

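A/B testing has long been a cornerstone of optimizing user experiences, refining messaging, and improving conversion flows. Its traditional reliance on random assignment, however, often means long test cycles, sometimes lasting weeks, just to reach statistical significance. The process works, but it is inherently slow and frequently misses early, important signals hidden in user behavior.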

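Enter the future of experimentation: an AI-powered A/B testing engine built with services such as Amazon Bedrock, Amazon Elastic Container Service (Amazon ECS), and Amazon DynamoDB. Rather than assigning variants at random, the system analyzes each user's context and makes dynamic, personalized variant assignment decisions while the experiment is running. The result is less noise, earlier identification of meaningful behavioral patterns, and a faster path to confident, data-driven conclusions. This article explores the architecture and approach behind such an engine, offering a blueprint for scalable, adaptive, and personalized experimentation powered by serverless AWS services.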

Overcoming the Limitations of Traditional A/B Testing

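Traditional A/B testing follows a simple principle: randomly assign users to variants (A or B), collect data, and declare a winner based on predefined metrics. The approach is foundational, but it has inherent limitations that can slow optimization and obscure deeper insight: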

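- Purely random assignment: Traditional A/B testing sticks to random assignment even when early data shows meaningful differences in user preferences or behavior. Users can therefore be exposed to a suboptimal variant for a long time, even when another variant clearly suits their profile better.

- Slow convergence: Collecting enough data to reach statistical significance usually means experiments run for weeks. That delay slows product iteration, postpones revenue opportunities, and puts the organization at a competitive disadvantage.

- High noise levels: Blanket random assignment can show users variants that plainly do not match their needs or preferences. This "noise" can mask real insights, make effective strategies harder to spot, and sometimes force extensive post-hoc segmentation to get a clear picture.

- Manual optimization burden: Identifying subtle behavioral patterns or segment-specific preferences typically requires substantial manual analysis after the experiment ends. This reactive approach is time-consuming and rarely makes effective use of real-time signals.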
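To make the slow-convergence point concrete, the sketch below estimates how many users each variant needs under a standard two-sided, two-proportion z-test, and how many days that takes at a given traffic level. The baseline rate, lift, significance level, power, and daily traffic are illustrative assumptions, not figures from this article.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_a: float, p_b: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per variant for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # critical value for the desired power
    variance = p_a * (1 - p_a) + p_b * (1 - p_b)   # sum of the two Bernoulli variances
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_a - p_b) ** 2)


def days_to_significance(n_per_variant: int, daily_users: int, variants: int = 2) -> int:
    """Days of traffic needed when users are split evenly across the variants."""
    return math.ceil(n_per_variant * variants / daily_users)


# Assumed scenario: 5.0% baseline conversion, 5.5% for the challenger, 2,000 users per day.
n = sample_size_per_variant(0.05, 0.055)
days = days_to_significance(n, daily_users=2000)
```

Under these assumed numbers the test needs roughly 31,000 users per variant, which is about a month of traffic. Smaller lifts push the requirement up quadratically, which is why purely random experiments so often run for weeks.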

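Consider a retail scenario: a company tests two call-to-action (CTA) buttons, "Buy Now" (variant A) and "Buy Now - Free Shipping" (variant B). Initial data may show variant B performing better. A deeper manual analysis, however, might reveal that premium members (who already get free shipping) hesitate at variant B, while discount-seeking shoppers respond to it strongly. Mobile users, in turn, may prefer variant A because of screen size. A traditional test averages these different behaviors over a long period, which makes it hard to act on such nuanced preferences without extensive manual segmentation. This is where AI-assisted assignment becomes valuable: it adapts in real time instead of waiting for the post-hoc breakdown.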
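The averaging effect in the retail example can be shown with a few lines of Python. The counts below are invented purely for illustration, and the three segments are treated as disjoint for simplicity (in practice a premium member can also be a mobile user).

```python
from collections import defaultdict

# Invented (segment, variant, visitors, conversions) rows, for illustration only.
ROWS = [
    ("premium_member", "A", 1000, 80),
    ("premium_member", "B", 1000, 60),
    ("discount_seeker", "A", 1000, 40),
    ("discount_seeker", "B", 1000, 90),
    ("mobile", "A", 1000, 55),
    ("mobile", "B", 1000, 45),
]


def conversion_rates(rows, by_segment: bool):
    """Conversion rate per variant, optionally broken down by segment."""
    totals = defaultdict(lambda: [0, 0])  # key -> [visitors, conversions]
    for segment, variant, visitors, conversions in rows:
        key = (segment, variant) if by_segment else variant
        totals[key][0] += visitors
        totals[key][1] += conversions
    return {key: conv / vis for key, (vis, conv) in totals.items()}


def best_variant_per_segment(rows):
    """Pick the higher-converting variant inside each segment."""
    rates = conversion_rates(rows, by_segment=True)
    segments = {segment for segment, _ in rates}
    return {s: max("AB", key=lambda v: rates[(s, v)]) for s in segments}


overall = conversion_rates(ROWS, by_segment=False)
per_segment = best_variant_per_segment(ROWS)
```

With this toy data, variant B wins on the overall average, yet variant A is the better choice for two of the three segments. A single "winner" hides exactly the information an adaptive engine is meant to use.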

Building an Adaptive A/B Testing Engine with AWS

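The adaptive A/B testing engine is a significant evolution of its traditional counterpart. By incorporating real-time user context and early behavioral patterns, it enables smarter, more dynamic variant assignment. At its core, the solution relies on Amazon Bedrock: instead of locking every user into a fixed variant, it evaluates the individual user's context, retrieves historical behavioral data, and selects the best variant for that specific interaction.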
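The flow described above (evaluate context, retrieve history, select a variant) can be sketched roughly as follows. Everything here is hypothetical: `UserContext`, `load_history`, and `select_with_model` are placeholder names, and falling back to deterministic hash bucketing when the model call fails is an assumption of this sketch rather than something the article specifies.

```python
import hashlib
from dataclasses import dataclass
from typing import Any, Callable, List, Optional, Sequence


@dataclass(frozen=True)
class UserContext:
    """Hypothetical per-request context; the field names are illustrative."""
    user_id: str
    device_type: str
    referrer_type: Optional[str] = None


def hash_assignment(user_id: str, experiment_id: str, variants: Sequence[str]) -> str:
    """Traditional baseline: deterministic pseudo-random bucketing by hash."""
    digest = hashlib.sha256(f"{experiment_id}:{user_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def assign_variant(
    ctx: UserContext,
    experiment_id: str,
    variants: Sequence[str],
    load_history: Callable[[str], List[Any]],
    select_with_model: Callable[[UserContext, List[Any], Sequence[str]], Optional[str]],
) -> str:
    """Ask the model for a variant; fall back to hash bucketing on any failure."""
    history = load_history(ctx.user_id)  # e.g. past events for this user
    try:
        choice = select_with_model(ctx, history, variants)
    except Exception:
        choice = None  # model unavailable or timed out
    if choice in variants:
        return choice
    return hash_assignment(ctx.user_id, experiment_id, variants)
```

Injecting the history loader and the model call as plain callables keeps the decision path testable without any AWS dependency, and the fallback means a user always receives some variant.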

The system is built on a serverless architecture within AWS, designed for scalability, resilience, and efficiency:

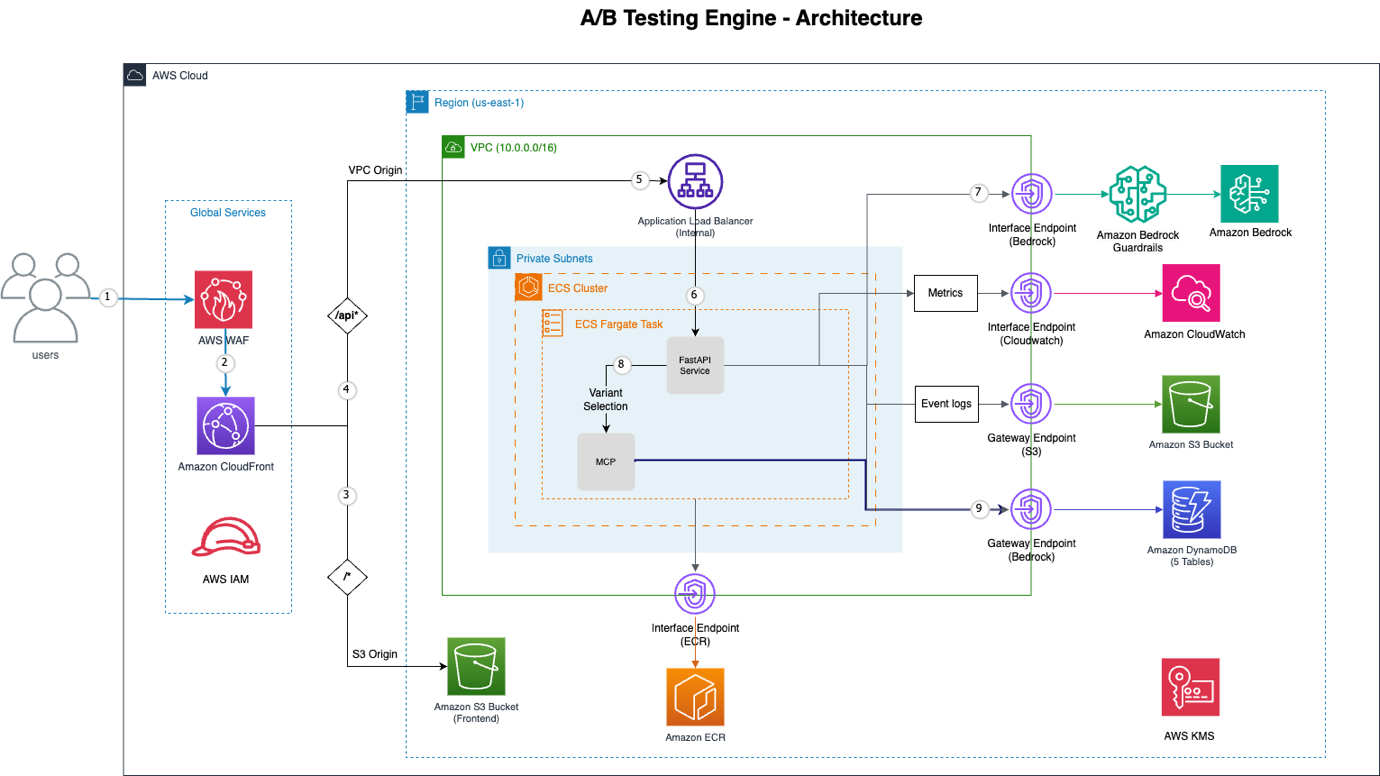

Figure 1: A/B testing engine architecture


The key components that make this possible are summarized below:

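| Component | Function |
|---|---|
| Amazon CloudFront | Global content delivery network (CDN) that fronts the application and, combined with AWS WAF, provides distributed denial-of-service (DDoS) protection, SQL injection defense, and rate limiting. |
| AWS WAF | Web application firewall integrated with CloudFront for enhanced security. |
| VPC Origin | Establishes a private connection from Amazon CloudFront to the internal Application Load Balancer, removing public internet exposure for backend services. |
| Amazon ECS with AWS Fargate | Serverless container orchestration platform that runs the FastAPI application with high availability and scalability, without servers to manage. |
| Amazon Bedrock | Central AI decision engine, using models such as Claude Sonnet with native tool use for intelligent variant selection. |
| Model Context Protocol (MCP) | Provides structured access to user behavior and experiment data, so Bedrock can retrieve specific information efficiently. |
| VPC endpoints | Provide private connectivity to AWS services such as Bedrock, DynamoDB, S3, ECR, and CloudWatch, improving security and reducing latency. |
| Amazon DynamoDB | Fully managed, serverless NoSQL database holding five tables: experiments, events, assignments, user profiles, and batch jobs. |
| Amazon S3 | Durable storage for static frontend hosting and event logs, with high availability and scalability. |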

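This architecture provides a robust, adaptive experimentation platform that lets organizations move past the limits of random assignment toward a genuinely intelligent approach to A/B testing.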
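As an illustration of what a row in the assignments table might look like, the sketch below encodes a record in DynamoDB's low-level attribute-value format. The attribute names, the key choice, and the `confidence` field are assumptions made for this example; the article does not describe the table schemas.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Assignment:
    """Hypothetical shape of one row in the assignments table."""
    experiment_id: str
    user_id: str
    variant: str
    confidence: float
    assigned_at: datetime


def to_dynamodb_item(a: Assignment) -> dict:
    """Encode the record using DynamoDB attribute-value types (S = string, N = number)."""
    return {
        "experiment_id": {"S": a.experiment_id},         # assumed partition key
        "user_id": {"S": a.user_id},                     # assumed sort key
        "variant": {"S": a.variant},
        "confidence": {"N": str(a.confidence)},          # numbers are sent as strings
        "assigned_at": {"S": a.assigned_at.isoformat()},
    }


item = to_dynamodb_item(
    Assignment("exp1", "u1", "B", 0.82, datetime(2025, 1, 1, tzinfo=timezone.utc))
)
```

A dictionary in this shape is what the low-level `boto3.client("dynamodb").put_item(TableName=..., Item=item)` call expects; the table name is left out here because the article does not give one.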

The Role of Amazon Bedrock in Intelligent Variant Assignment

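What sets this A/B testing engine apart is its ability to combine several data points (user context, historical behavior, patterns from similar users, and real-time performance metrics) to select the most effective variant. At the center of this intelligence is Amazon Bedrock, specifically its ability to run advanced generative AI models such as Claude Sonnet together with native tool use. This combination lets the system emulate an experienced A/B testing specialist, making real-time, data-driven decisions that adapt to each user interaction.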

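When a user requests a variant, the system does not simply pick "A" or "B". Instead, it builds a comprehensive prompt that gives Amazon Bedrock the information it needs to make an informed decision. The process relies on the model's ability to interpret complex instructions and to call predefined tools for additional context, so the AI has the full picture before it recommends an assignment. For more on evaluating such intelligent agents in production, see "Evaluating AI Agents in Production: A Practical Guide to Strands Evals".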
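A tool-use loop of this kind might look like the following sketch. It assumes the message shapes of the Bedrock Converse API (`toolUse` and `toolResult` content blocks, and a `stopReason` of `tool_use`); the article does not say which Bedrock API the engine actually calls. The `converse` argument is a hypothetical wrapper, for example `functools.partial(client.converse, modelId=..., toolConfig=...)`, and `tools` maps tool names to ordinary Python functions.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]


def run_tool_loop(
    converse: Callable[..., Dict[str, Any]],
    tools: Dict[str, Callable[..., Dict[str, Any]]],
    system_prompt: str,
    user_prompt: str,
    max_turns: int = 6,
) -> str:
    """Call the model repeatedly, executing requested tools, until it returns final text."""
    messages: List[Message] = [{"role": "user", "content": [{"text": user_prompt}]}]
    for _ in range(max_turns):
        response = converse(system=[{"text": system_prompt}], messages=messages)
        reply = response["output"]["message"]
        messages.append(reply)
        if response.get("stopReason") != "tool_use":
            # Final answer: concatenate the text blocks of the reply.
            return "".join(block.get("text", "") for block in reply["content"])
        results = []
        for block in reply["content"]:
            if "toolUse" not in block:
                continue
            call = block["toolUse"]
            handler = tools.get(call["name"])
            if handler is None:
                result = {"toolUseId": call["toolUseId"], "status": "error",
                          "content": [{"text": f"unknown tool: {call['name']}"}]}
            else:
                result = {"toolUseId": call["toolUseId"],
                          "content": [{"json": handler(**call["input"])}]}
            results.append({"toolResult": result})
        # Tool results go back to the model as a user-role message.
        messages.append({"role": "user", "content": results})
    raise RuntimeError("model did not produce a final answer within max_turns")
```

Capping the number of turns is a design choice of this sketch: it bounds latency and cost if the model keeps requesting tools.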

The AI Decision Prompt: Contextual Intelligence in Action

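The quality of Amazon Bedrock's decisions depends on a carefully designed prompt structure. The prompt has two main parts: a system prompt that defines the model's role and behavior, and a user prompt that supplies the specific, real-time context for the decision. This design keeps the AI within clearly defined boundaries while giving it rich, dynamic information to work with.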
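One way to carry the two-part prompt is a Bedrock Converse API request, where the system prompt and the user message travel in separate fields and each tool is declared with a JSON schema. This is a sketch under assumptions: the article does not state which API is used, the model ID is a placeholder, and the parameters given for `get_user_assignment` are guesses.

```python
from typing import Any, Dict, List


def tool_spec(name: str, description: str,
              properties: Dict[str, Any], required: List[str]) -> Dict[str, Any]:
    """Describe one tool in the Converse API's toolSpec format."""
    return {
        "toolSpec": {
            "name": name,
            "description": description,
            "inputSchema": {"json": {"type": "object",
                                     "properties": properties,
                                     "required": required}},
        }
    }


def build_converse_request(model_id: str, system_prompt: str, user_prompt: str,
                           tools: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Assemble keyword arguments for a Converse call; the system and user parts stay separate."""
    return {
        "modelId": model_id,
        "system": [{"text": system_prompt}],
        "messages": [{"role": "user", "content": [{"text": user_prompt}]}],
        "toolConfig": {"tools": tools},
        "inferenceConfig": {"temperature": 0.0, "maxTokens": 1024},  # assumed settings
    }


request = build_converse_request(
    model_id="MODEL_ID_PLACEHOLDER",  # not given in the article
    system_prompt="You are an expert A/B testing optimization specialist...",
    user_prompt="Select the optimal variant for this user in experiment exp1.",
    tools=[tool_spec(
        "get_user_assignment",
        "Check existing variant assignment (CALL THIS FIRST)",
        {"user_id": {"type": "string"}, "experiment_id": {"type": "string"}},  # assumed parameters
        ["user_id", "experiment_id"],
    )],
)
```

The resulting dictionary could be passed as `boto3.client("bedrock-runtime").converse(**request)`. A low temperature is chosen here on the assumption that assignment decisions should be as repeatable as possible.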

The following is a conceptual view of the prompt structure that Amazon Bedrock receives:

# System Prompt (defines Amazon Bedrock's role and behavior)
system_prompt = """
You are an expert A/B testing optimization specialist with access to tools for gathering user behavior data.

CRITICAL INSTRUCTIONS:
1. ALWAYS call get_user_assignment FIRST to check for existing assignments
2. Only call other tools if you need specific information to make a better decision
3. Call tools based on what information would be valuable for this specific decision
4. If user has existing assignment, keep it unless there's strong evidence (30%+ improvement) to change
5. CRITICAL: Your final response MUST be ONLY valid JSON with no additional text, explanations, or commentary before or after the JSON object

Available tools:
- get_user_assignment: Check existing variant assignment (CALL THIS FIRST)
- get_user_profile: Get user behavioral profile and preferences
- get_similar_users: Find users with similar behavior patterns
- get_experiment_context: Get experiment configuration and performance
- get_session_context: Analyze current session behavior
- get_user_journey: Get user's interaction history
- get_variant_performance: Get variant performance metrics
- analyze_user_behavior: Deep behavioral analysis from event history
- update_user_profile: Update user profile with AI-derived insights
- get_profile_learning_status: Check profile data quality and confidence
- batch_update_profiles: Batch update multiple user profiles

Make intelligent, data-driven decisions. Use the tools you need to gather sufficient context for optimal variant selection.

RESPONSE FORMAT: Return ONLY the JSON object. Do not include any text before or after it."""

# User Prompt (provides specific decision context)
prompt = f"""Select the optimal variant for this user in experiment {experiment_id}.

USER CONTEXT:
- User ID: {user_context.user_id}
- Session ID: {user_context.session_id}
- Device: {user_context.device_type} (Mobile: {bool(user_context.is_mobile)})
- Current Page: {user_context.current_session.current_page}
- Referrer: {user_context.current_session.referrer_type or 'direct'}
- Previous Variants: {user_context.current_session.previous_variants or 'None'}

CONTEXT INSIGHTS:
{analyze_user_context()}

PERSONALIZATION CONTEXT:
- Engagement Score: {profile.engagement_score:.2f}
- Conversion Likelihood: {profile.conversion_likelihood:.2f}
- Interaction Style: {profile.interaction_style}
- Previously Successful Variants: {

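This comprehensive prompt lets Amazon Bedrock act as an intelligent agent that makes nuanced decisions instead of relying on coarse random assignment. By giving the model access to a range of data retrieval and analysis tools, it ensures the model can gather the information it needs to optimize for both individual user preferences and experiment goals. The approach improves the precision and speed of A/B testing and leads to more effective, personalized user experiences. Native tool use of this kind is a powerful capability, similar to the concepts explored in Amazon Bedrock AgentCore.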
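Two of the prompt's rules lend themselves to enforcement in application code as well: the JSON-only response format (instruction 5) and the 30% threshold for changing an existing assignment (instruction 4). The sketch below is one possible guard. The `variant` key is an assumed response field, since the prompt excerpt does not show the response schema, and reading "30%+ improvement" as relative lift in conversion rate is likewise an assumption.

```python
import json
from typing import Optional, Sequence


def parse_decision(raw: str, allowed_variants: Sequence[str]) -> Optional[dict]:
    """Return the decision if the reply is a bare JSON object naming a known variant, else None."""
    try:
        decision = json.loads(raw)
    except json.JSONDecodeError:
        return None  # extra prose around the JSON breaks instruction 5
    if not isinstance(decision, dict):
        return None
    if decision.get("variant") not in allowed_variants:  # "variant" is an assumed key
        return None
    return decision


def should_switch(current_rate: float, candidate_rate: float,
                  threshold: float = 0.30) -> bool:
    """Keep the existing variant unless the candidate's relative lift reaches the threshold."""
    if current_rate <= 0:
        return candidate_rate > 0
    return (candidate_rate - current_rate) / current_rate >= threshold
```

For example, moving from a 5% to a 6% conversion rate is a 20% relative lift and would not trigger a switch, while 5% to 7% (a 40% lift) would. When `parse_decision` returns `None`, the caller can retry or fall back to a default assignment.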

Unlocking Scalable, Personalized Experimentation

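Bringing AI into A/B testing, particularly through Amazon Bedrock, marks a shift from broad, randomized experiments to precise, adaptive, and personalized interactions. An AI-driven engine of this kind mitigates the limitations of traditional methods, such as slow convergence and high noise, and adds the ability to optimize in real time. By assigning variants dynamically based on individual user context, behavioral history, and predictive insights, organizations can reach results faster, gain deeper actionable intelligence, and deliver genuinely tailored user experiences.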

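The serverless architecture, backed by AWS services such as Amazon ECS with AWS Fargate and Amazon DynamoDB, keeps this system scalable and cost-effective, handling varying load without manual intervention. It lets companies move beyond identifying a single "winning" variant for a general audience and toward understanding what resonates most with each individual user at a given moment. The future of user experience optimization is adaptive, intelligent, and AI-driven.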

