Revolutionizing A/B Testing with AI and Amazon Bedrock

A/B testing has long been the cornerstone of optimizing user experiences, refining messaging, and enhancing conversion flows. Yet, its traditional reliance on random assignment often means lengthy testing cycles, sometimes spanning weeks, just to achieve statistical significance. This process, while effective, is inherently slow and frequently misses early, crucial signals hidden within user behavior.

This article describes an AI-powered A/B testing engine built on Amazon Bedrock, Amazon Elastic Container Service (Amazon ECS), and Amazon DynamoDB. Instead of assigning variants purely at random, the system analyzes user context to make dynamic, personalized assignment decisions while an experiment is running. The goal is reduced noise, earlier identification of significant behavioral patterns, and a faster path to confident, data-driven conclusions. The sections below explore the architecture and methodology behind such an engine, offering a blueprint for scalable, adaptive, and personalized experimentation on serverless AWS services.

Overcoming Traditional A/B Testing Limitations

Traditional A/B testing operates on a straightforward principle: randomly assign users to different variants (A or B), collect data, and declare a winner based on predefined metrics. While foundational, this approach is fraught with inherent limitations that can hinder rapid optimization and deep insights:

- Solely Random Assignment: Even when early data hints at meaningful differences in user preferences or behaviors, traditional A/B testing strictly adheres to random distribution. This means users might be exposed to suboptimal variants for extended periods, even if an alternative clearly performs better for their specific profile.

- Slow Convergence: The necessity to gather a statistically significant volume of data often means experiments drag on for weeks. This delay can slow down product iterations, defer revenue opportunities, and put organizations at a competitive disadvantage.

- High Noise Level: A blanket random assignment can expose users to variants that are clearly misaligned with their needs or preferences. This "noise" can obscure genuine insights, making it harder to discern effective strategies and sometimes requiring extensive post-hoc analysis to segment data for clarity.

- Manual Optimization Burden: Identifying nuanced behavioral patterns or segment-specific preferences typically requires significant manual analysis after the experiment concludes. This reactive approach is time-consuming and often fails to leverage real-time signals effectively.
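
To make the "slow convergence" point concrete, here is a minimal sketch of the standard sample-size approximation for comparing two conversion rates. The baseline rate, target rate, and daily traffic figure are illustrative assumptions, not numbers from the system described in this article.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_control: float, p_variant: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per variant for a two-sided two-proportion z-test."""
    if p_control == p_variant:
        raise ValueError("conversion rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    effect = p_variant - p_control
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Assumed example: 10% baseline conversion, hoping to detect a lift to 12%.
n = sample_size_per_variant(0.10, 0.12)

# Assumed traffic: 500 eligible users per day, split across two variants.
days = math.ceil(2 * n / 500)
print(f"{n} users per variant, about {days} days of traffic")
```

Under these assumptions the test needs a few thousand users per variant, which at modest traffic already means about two weeks of waiting. Smaller expected lifts push the requirement up quickly because the effect size is squared in the denominator.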

Consider a retail scenario in which a company tests two call-to-action (CTA) buttons: "Buy Now" (Variant A) and "Buy Now – Free Shipping" (Variant B). Initial data might show Variant B outperforming. However, a deeper manual analysis could reveal that premium members (who already have free shipping) hesitate with Variant B, while deal-seekers flock to it. Mobile users, meanwhile, might prefer Variant A because of screen size constraints. Traditional methods average these behaviors over a long period, so acting on segment-level preferences requires extensive manual segmentation. This is where AI-assisted assignment helps: it can adapt to these differences while the experiment is still running.
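
The retail scenario can be illustrated with a small sketch showing how an overall average hides segment-level preferences. The visitor and conversion counts below are invented for illustration only, and the segment names are hypothetical labels.

```python
from collections import defaultdict

# Hypothetical (segment, variant, visitors, conversions) rows, invented for illustration.
rows = [
    ("premium_member", "A", 1000, 80),
    ("premium_member", "B", 1000, 60),
    ("deal_seeker", "A", 1000, 50),
    ("deal_seeker", "B", 1000, 110),
]


def conversion_rates(rows):
    """Return (overall rate per variant, rate per (segment, variant))."""
    totals = defaultdict(lambda: [0, 0])  # variant -> [visitors, conversions]
    by_segment = {}
    for segment, variant, visitors, conversions in rows:
        totals[variant][0] += visitors
        totals[variant][1] += conversions
        by_segment[(segment, variant)] = conversions / visitors
    overall = {variant: c / n for variant, (n, c) in totals.items()}
    return overall, by_segment


def best_variant_per_segment(by_segment):
    """Pick the variant with the highest conversion rate within each segment."""
    best = {}
    for (segment, variant), rate in by_segment.items():
        if segment not in best or rate > by_segment[(segment, best[segment])]:
            best[segment] = variant
    return best


overall, by_segment = conversion_rates(rows)
print(overall)                               # Variant B wins on average
print(best_variant_per_segment(by_segment))  # but premium members prefer A
```

With these made-up numbers, Variant B wins overall while premium members convert better on Variant A, which is exactly the kind of signal a single aggregate winner would hide.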

Architecting an Adaptive A/B Testing Engine with AWS

The adaptive A/B testing engine builds on its traditional counterpart by using real-time user context and early behavioral patterns to make smarter, more dynamic variant assignments. At its core is Amazon Bedrock: instead of committing every user to a fixed variant, the model evaluates individual user context, retrieves historical behavioral data, and selects the variant expected to perform best for that specific interaction.

The system is built on a robust, serverless architecture within AWS, ensuring scalability, resilience, and efficiency:

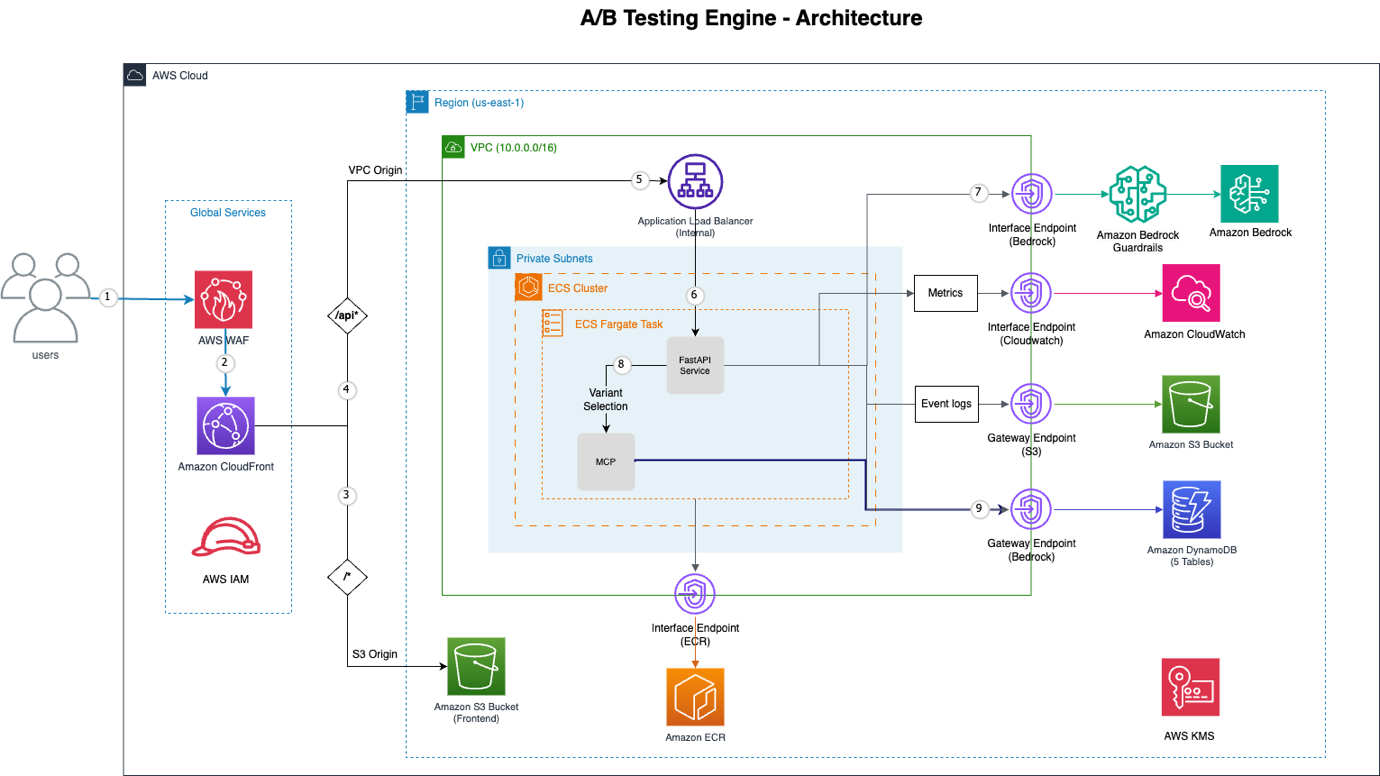

Figure 1: A/B Testing Engine Architecture

Here’s a breakdown of the key AWS components making this possible:

| Component | Functionality |
|---|---|
| Amazon CloudFront | Global content delivery network (CDN) that fronts the application; together with AWS WAF, it provides distributed denial-of-service (DDoS) protection, SQL injection deterrence, and rate limiting. |
| AWS WAF | Web application firewall integrated with CloudFront for enhanced security. |
| VPC Origin | Establishes a private connection from Amazon CloudFront to an internal Application Load Balancer, eliminating public internet exposure for backend services. |
| Amazon ECS with AWS Fargate | Serverless container orchestration platform running the FastAPI application, providing high availability and scalability without managing servers. |
| Amazon Bedrock | The central AI decision engine, using models such as Claude Sonnet with native tool use for intelligent variant selection. |
| Model Context Protocol (MCP) | Provides structured access to user behavior and experiment data, enabling the model to retrieve specific information efficiently. |
| VPC Endpoints | Provide private connectivity to AWS services such as Bedrock, DynamoDB, S3, ECR, and CloudWatch, enhancing security and reducing latency. |
| Amazon DynamoDB | A fully managed, serverless NoSQL database hosting five tables for experiments, events, assignments, user profiles, and batch jobs. |
| Amazon S3 | Used for static frontend hosting and durable storage of event logs, offering high availability and scalability. |

This architecture delivers a powerful and adaptive experimentation platform, enabling organizations to move beyond the limitations of random assignment and embrace a truly intelligent approach to A/B testing.
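
As a rough illustration of how a request might flow through these components, the sketch below uses an in-memory store as a stand-in for the DynamoDB assignments and events tables and a simple rule as a stand-in for the Bedrock decision. The record layout, function names, and the rule itself are hypothetical and are not taken from the actual implementation.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class InMemoryStore:
    """Stand-in for the assignments and events tables (hypothetical layout)."""
    assignments: Dict[Tuple[str, str], str] = field(default_factory=dict)
    events: List[dict] = field(default_factory=list)


def rule_based_decide(context: dict) -> str:
    """Placeholder for the model call: keep an existing assignment,
    otherwise choose by device type as in the retail example."""
    if context.get("existing_assignment"):
        return context["existing_assignment"]
    return "A" if context.get("device_type") == "mobile" else "B"


def assign_variant(store: InMemoryStore, experiment_id: str, user_id: str,
                   context: dict,
                   decide: Callable[[dict], str] = rule_based_decide) -> str:
    """Look up any prior assignment, ask the decision function, persist the result."""
    key = (experiment_id, user_id)
    previous = store.assignments.get(key)
    variant = decide({**context, "existing_assignment": previous})
    store.assignments[key] = variant
    store.events.append({
        "type": "assignment",
        "experiment_id": experiment_id,
        "user_id": user_id,
        "variant": variant,
        "previous": previous,
        "timestamp": time.time(),
    })
    return variant


store = InMemoryStore()
first = assign_variant(store, "cta-test", "user-1", {"device_type": "mobile"})
second = assign_variant(store, "cta-test", "user-1", {"device_type": "desktop"})
print(first, second)  # the second call keeps the earlier assignment
```

Keeping the decision function pluggable means the same flow can be exercised locally with a simple rule and later wired to a model-backed decision without changing how assignments and events are recorded.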

Amazon Bedrock's Role in Intelligent Variant Assignment

What sets this A/B testing engine apart is its ability to combine multiple data points (user context, historical behavior, patterns from similar users, and real-time performance metrics) to choose the most effective variant. At the heart of this is Amazon Bedrock, which provides access to generative AI models such as Claude Sonnet with native tool use. This combination lets the system act like an expert A/B testing specialist, making real-time, data-driven decisions that adapt to individual user interactions.

When a user initiates a variant request, the system doesn't simply pick 'A' or 'B'. Instead, it constructs a comprehensive prompt that gives Amazon Bedrock the information needed to make an informed decision. The model interprets the instructions and calls predefined tools to gather additional context before recommending an assignment. For more on how such agents are evaluated in production, see "Evaluating AI Agents for Production: A Practical Guide to Strands' Evals."
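
One way such a tool-use exchange could be wired up is through the Amazon Bedrock Converse API, sketched below. This is an assumption rather than the confirmed implementation: the model ID is a placeholder, the input schema for `get_user_assignment` is hypothetical, and only one of the tools is declared to keep the example short. The client is passed in, so any object exposing a compatible `converse` method (such as a `boto3` `bedrock-runtime` client) would work.

```python
from typing import Any, Callable, Dict

MODEL_ID = "your-claude-sonnet-model-id"  # placeholder, not a real model ID

# Tool declaration in Converse API format; the input schema is an assumption.
TOOL_CONFIG = {
    "tools": [
        {
            "toolSpec": {
                "name": "get_user_assignment",
                "description": "Check existing variant assignment",
                "inputSchema": {
                    "json": {
                        "type": "object",
                        "properties": {
                            "user_id": {"type": "string"},
                            "experiment_id": {"type": "string"},
                        },
                        "required": ["user_id", "experiment_id"],
                    }
                },
            }
        }
    ]
}


def run_decision(client: Any, system_prompt: str, user_prompt: str,
                 tool_handlers: Dict[str, Callable[..., dict]],
                 max_turns: int = 5) -> str:
    """Call the model, execute any requested tools, and return the final text."""
    messages = [{"role": "user", "content": [{"text": user_prompt}]}]
    for _ in range(max_turns):
        response = client.converse(
            modelId=MODEL_ID,
            system=[{"text": system_prompt}],
            messages=messages,
            toolConfig=TOOL_CONFIG,
        )
        message = response["output"]["message"]
        messages.append(message)
        if response["stopReason"] != "tool_use":
            # Final answer: concatenate the text blocks of the reply.
            return "".join(block.get("text", "") for block in message["content"])
        # Run each requested tool and send the results back as a user turn.
        results = []
        for block in message["content"]:
            if "toolUse" in block:
                call = block["toolUse"]
                output = tool_handlers[call["name"]](**call["input"])
                results.append({
                    "toolResult": {
                        "toolUseId": call["toolUseId"],
                        "content": [{"json": output}],
                    }
                })
        messages.append({"role": "user", "content": results})
    raise RuntimeError("model did not produce a final answer within max_turns")


# Usage (requires AWS credentials and boto3, shown as a comment only):
# import boto3
# client = boto3.client("bedrock-runtime")
# text = run_decision(client, system_prompt, prompt, {"get_user_assignment": my_lookup})
```

Capping the number of turns is a defensive choice in this sketch so that a model that keeps requesting tools cannot hold a variant request open indefinitely.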

The AI Decision Prompt: Contextual Intelligence in Action

The effectiveness of Amazon Bedrock's decision-making hinges on the meticulously crafted prompt structure that informs the AI. This prompt comprises two main parts: a system prompt defining Bedrock's role and behavior, and a user prompt providing specific, real-time contextual data for the decision. This design ensures that the AI operates within defined boundaries while leveraging rich, dynamic information.

Here’s a conceptual look at the prompt structure that Amazon Bedrock receives:

# System Prompt (defines Amazon Bedrock's role and behavior)
system_prompt = """
You are an expert A/B testing optimization specialist with access to tools for gathering user behavior data.

CRITICAL INSTRUCTIONS:
1. ALWAYS call get_user_assignment FIRST to check for existing assignments
2. Only call other tools if you need specific information to make a better decision
3. Call tools based on what information would be valuable for this specific decision
4. If user has existing assignment, keep it unless there's strong evidence (30%+ improvement) to change
5. CRITICAL: Your final response MUST be ONLY valid JSON with no additional text, explanations, or commentary before or after the JSON object

Available tools:
- get_user_assignment: Check existing variant assignment (CALL THIS FIRST)
- get_user_profile: Get user behavioral profile and preferences
- get_similar_users: Find users with similar behavior patterns
- get_experiment_context: Get experiment configuration and performance
- get_session_context: Analyze current session behavior
- get_user_journey: Get user's interaction history
- get_variant_performance: Get variant performance metrics
- analyze_user_behavior: Deep behavioral analysis from event history
- update_user_profile: Update user profile with AI-derived insights
- get_profile_learning_status: Check profile data quality and confidence
- batch_update_profiles: Batch update multiple user profiles

Make intelligent, data-driven decisions. Use the tools you need to gather sufficient context for optimal variant selection.

RESPONSE FORMAT: Return ONLY the JSON object. Do not include any text before or after it."""

# User Prompt (provides specific decision context)
prompt = f"""Select the optimal variant for this user in experiment {experiment_id}.

USER CONTEXT:
- User ID: {user_context.user_id}
- Session ID: {user_context.session_id}
- Device: {user_context.device_type} (Mobile: {bool(user_context.is_mobile)})
- Current Page: {user_context.current_session.current_page}
- Referrer: {user_context.current_session.referrer_type or 'direct'}
- Previous Variants: {user_context.current_session.previous_variants or 'None'}

CONTEXT INSIGHTS:
{analyze_user_context()}

PERSONALIZATION CONTEXT:
- Engagement Score: {profile.engagement_score:.2f}
- Conversion Likelihood: {profile.conversion_likelihood:.2f}
- Interaction Style: {profile.interaction_style}
- Previously Successful Variants: {

This prompt lets the model behind Amazon Bedrock act as an agent that makes nuanced, per-user decisions rather than falling back on purely random assignment. Because it can call tools for data retrieval and analysis, the model can gather the information it needs to weigh individual user preferences against experiment goals. The aim is more precise and faster A/B testing, along with more personalized user experiences. Native tool use of this kind is related to concepts explored in Amazon Bedrock AgentCore.
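
Because the system prompt demands a JSON-only reply and asks the model to keep an existing assignment unless there is a 30%+ improvement, it can be useful to enforce both rules in application code as well. The sketch below is one possible guardrail, not the article's implementation: the `variant` field name is an assumption (the response schema is not shown above), and "30% improvement" is interpreted here as relative lift in conversion rate.

```python
import json
from typing import Dict, Optional, Set


def parse_decision(raw: str, allowed: Set[str]) -> Optional[str]:
    """Return the chosen variant, or None if the reply is not valid JSON
    or names a variant outside the experiment."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    variant = data.get("variant") if isinstance(data, dict) else None  # assumed field name
    return variant if isinstance(variant, str) and variant in allowed else None


def relative_lift(current_rate: float, candidate_rate: float) -> float:
    """Relative improvement of the candidate over the current conversion rate."""
    if current_rate <= 0:
        return float("inf") if candidate_rate > 0 else 0.0
    return (candidate_rate - current_rate) / current_rate


def final_variant(raw: str, allowed: Set[str], existing: Optional[str],
                  rates: Dict[str, float], fallback: str,
                  min_lift: float = 0.30) -> str:
    """Apply the JSON-only and keep-unless-30%-better rules to a model reply."""
    proposed = parse_decision(raw, allowed)
    if proposed is None:
        return existing or fallback      # unusable reply: stay put
    if existing is None or proposed == existing:
        return proposed                  # nothing to override
    lift = relative_lift(rates.get(existing, 0.0), rates.get(proposed, 0.0))
    return proposed if lift >= min_lift else existing


variants = {"A", "B"}
print(final_variant('{"variant": "B"}', variants, "A", {"A": 0.10, "B": 0.12}, "A"))
print(final_variant('{"variant": "B"}', variants, "A", {"A": 0.10, "B": 0.14}, "A"))
```

In the first call the proposed switch offers only a 20% relative lift, so the existing assignment is kept. In the second the lift is 40%, so the switch is allowed. Validating the reply outside the model also gives the service a predictable fallback when the output is malformed.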

Unlocking Scalable & Personalized Experimentation

Integrating AI into A/B testing through Amazon Bedrock shifts experimentation from broad, randomized tests toward precise, adaptive, and personalized interactions. This AI-powered engine addresses the limitations of traditional approaches, such as slow convergence and high noise, and adds real-time optimization. By dynamically assigning variants based on individual user context, behavioral history, and predictive insights, organizations can reach results faster, gain deeper insight, and deliver tailored user experiences.

The serverless architecture, built on AWS services such as Amazon ECS on AWS Fargate and Amazon DynamoDB, keeps the system scalable and cost-effective, handling varying loads without manual intervention. It lets companies move beyond identifying a single "winning" variant for a general audience and toward understanding what resonates with each user at a given moment. User experience optimization is becoming adaptive, intelligent, and AI-powered, and that changes how digital products and services evolve.

Original source

https://aws.amazon.com/blogs/machine-learning/build-an-ai-powered-a-b-testing-engine-using-amazon-bedrock/