Revolutionizing Video Search with Multimodal Embeddings

The media and entertainment industry holds enormous volumes of video content. From archival footage to daily uploads, the sheer volume makes traditional content discovery methods (manual tagging and keyword-based search) increasingly inefficient and often inaccurate. These legacy approaches struggle to capture the nuanced context embedded within video, leading to missed opportunities for content reuse, faster production, and enhanced viewer experiences.

Enter the era of multimodal embeddings. AWS is pioneering a solution that transcends these limitations, enabling semantic search capabilities across colossal video datasets. By harnessing the power of Amazon Nova models and Amazon OpenSearch Service, content creators and distributors can move beyond superficial keywords to truly understand and access their media libraries. This innovative approach allows natural language queries to plumb the depths of visual and auditory information, bringing unprecedented precision to content discovery.

Demonstrating this capability at an impressive scale, AWS has processed 792,270 videos from the AWS Open Data Registry, encompassing an astounding 8,480 hours of video content. This ambitious undertaking, which took just 41 hours to process over 30.5 million seconds of video, highlights the scalability and efficiency of this AI-driven approach. The first-year cost, including one-time ingestion and annual OpenSearch Service, was estimated at a highly competitive $23,632 (with OpenSearch Service Reserved Instances) to $27,328 (with on-demand). Such a solution fundamentally transforms how media companies interact with their digital assets, unlocking new avenues for content monetization and production workflows. This paradigm shift towards semantic understanding is a critical development for Enterprise AI in media.

Understanding the Scalable Multimodal AI Data Lake Architecture

At its core, this powerful multimodal video search system is built upon two interconnected workflows: video ingestion and search. These components seamlessly integrate to create an AI data lake that understands and makes searchable the intricate details of video content.

Video Ingestion Pipeline

The ingestion pipeline is engineered for parallel processing and efficiency. It utilizes four Amazon EC2 c7i.48xlarge instances, orchestrating up to 600 parallel workers to achieve a processing rate of 19,400 videos per hour. Videos initially uploaded to Amazon S3 are then processed by the Amazon Nova Multimodal Embeddings asynchronous API. This API intelligently segments videos into optimal 15-second chunks — a balance between capturing significant scene changes and managing the volume of generated embeddings. Each segment is then transformed into a 1024-dimensional embedding, representing its combined audio-visual features. While 3072-dimensional embeddings offer higher fidelity, the 1024-dimensional option provides a 3x storage cost saving with minimal impact on accuracy for this application, making it a pragmatic choice for scale.
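The segmentation and dimension trade-off can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes embeddings are stored as raw float32 values (4 bytes each) and ignores index overhead, and the helper names are hypothetical rather than part of any AWS API.

```python
import math

SEGMENT_SECONDS = 15       # segment length quoted in the article
BYTES_PER_FLOAT32 = 4      # assumption: vectors stored as raw float32


def segments_for(duration_seconds: float, segment_seconds: int = SEGMENT_SECONDS) -> int:
    """Fixed-length segments needed to cover one video (the last may be shorter)."""
    return math.ceil(duration_seconds / segment_seconds)


def raw_vector_bytes(num_vectors: int, dimension: int) -> int:
    """Raw storage for the vectors alone, excluding any index structures."""
    return num_vectors * dimension * BYTES_PER_FLOAT32


# Dataset-level estimate from the article's 8,480 hours of video.
total_seconds = 8_480 * 3600
# Lower bound: the true count is higher because each video's final
# segment is usually shorter than 15 seconds.
lower_bound_segments = math.ceil(total_seconds / SEGMENT_SECONDS)

size_1024 = raw_vector_bytes(lower_bound_segments, 1024)
size_3072 = raw_vector_bytes(lower_bound_segments, 3072)

print(f"segments (lower bound): {lower_bound_segments:,}")
print(f"1024-dim raw vectors:   {size_1024 / 2**30:.2f} GiB")
print(f"3072-dim raw vectors:   {size_3072 / 2**30:.2f} GiB")
print(f"ratio:                  {size_3072 / size_1024:.1f}x")
```

Under these assumptions the storage ratio is exactly the ratio of the dimensions, which is where the 3x figure comes from.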

To enhance searchability further, Amazon Nova Pro (or the newer, more cost-effective Nova 2 Lite) is employed to generate 10-15 descriptive tags per video from a predefined taxonomy. This dual approach ensures that content is discoverable both through semantic similarity and traditional keyword matching. These embeddings are stored in an OpenSearch k-NN index, optimized for vector similarity search, while the descriptive tags are indexed in a separate text index. This separation allows for flexible and efficient querying. The pipeline manages Bedrock's concurrency limits (30 concurrent jobs per account) through a robust job queue and polling mechanism, ensuring continuous and compliant processing.
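The job queue and polling mechanism might look something like the following. This is a minimal sketch, not the pipeline's actual code: start_job and get_status are hypothetical callables standing in for wrappers around the Bedrock StartAsyncInvoke and GetAsyncInvoke operations, and the status strings are assumed values.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, Iterable

MAX_CONCURRENT_JOBS = 30  # per-account limit quoted in the article


def run_with_concurrency_limit(
    video_uris: Iterable[str],
    start_job: Callable[[str], str],    # hypothetical: submits a job, returns its id
    get_status: Callable[[str], str],   # hypothetical: returns the job's status
    max_in_flight: int = MAX_CONCURRENT_JOBS,
    poll_interval_s: float = 5.0,
) -> Dict[str, str]:
    """Submit every video while never exceeding max_in_flight running jobs."""
    pending: Deque[str] = deque(video_uris)
    in_flight: Dict[str, str] = {}   # job id -> video URI
    results: Dict[str, str] = {}     # video URI -> final status

    while pending or in_flight:
        # Top up the set of running jobs until the limit is reached.
        while pending and len(in_flight) < max_in_flight:
            uri = pending.popleft()
            in_flight[start_job(uri)] = uri

        # Poll each running job and retire the ones that have finished.
        for job_id in list(in_flight):
            status = get_status(job_id)
            if status in ("Completed", "Failed"):   # assumed terminal states
                results[in_flight.pop(job_id)] = status

        if in_flight:
            time.sleep(poll_interval_s)

    return results
```

Passing the two callables in keeps the scheduling logic independent of any SDK, so it can be exercised with stubs before it is wired to real API calls.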

Below is a visual representation of this sophisticated ingestion process:

Figure 1: Video ingestion pipeline showing the flow from S3 video storage through Nova Multimodal Embeddings and Nova Pro to dual OpenSearch indexes

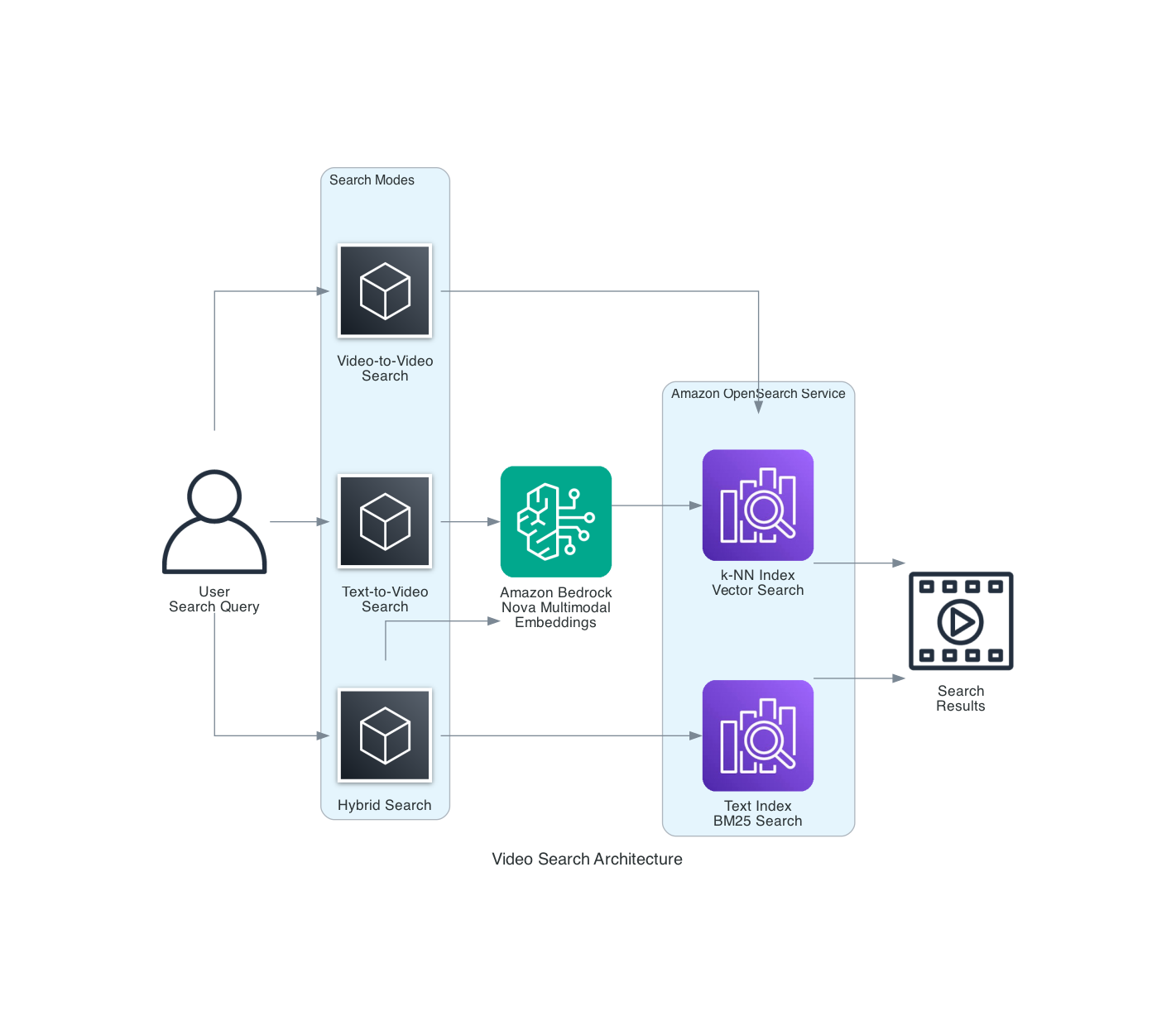

Empowering Diverse Video Search Capabilities

The search architecture is designed for versatility, offering multiple modes of content discovery:

- Text-to-video Search: Users can input natural language queries, such as "a drone shot of a bustling city at night" or "a close-up of a chef preparing a gourmet meal." The system converts these queries into embeddings, then leverages the OpenSearch k-NN index to find video segments or entire videos that semantically match the description, even if the exact words are not present in any metadata. This is ideal for intuitive content discovery and storyboarding.

- Video-to-video Search: For scenarios where a user has a video clip and wants to find similar content, this mode excels. By comparing the embeddings of the input video directly with those in the OpenSearch k-NN index, the system can identify visually and audibly analogous content. This is invaluable for identifying B-roll footage, ensuring content consistency, or discovering derivative works.

- Hybrid Search: Combining the best of both worlds, hybrid search integrates vector similarity with traditional keyword matching. The proposed solution uses a weighted approach (e.g., 70% vector similarity and 30% keyword matching). This ensures high accuracy and relevance, allowing specific metadata to guide search while semantic understanding provides broad contextual matches. This approach is particularly effective for complex queries that benefit from both precise tags and conceptual understanding.

Figure 2: Video search architecture demonstrating three search modes – text-to-video, video-to-video, and hybrid search combining k-NN and BM25
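To illustrate the search modes above, here is a rough sketch of a k-NN query body and a client-side version of the 70/30 weighting. The field name embedding is hypothetical, and min-max normalization is an assumption: the article gives the weights but not how the two score scales are reconciled.

```python
from typing import Dict, List, Tuple


def knn_query(query_vector: List[float], k: int = 10, field: str = "embedding") -> dict:
    """OpenSearch k-NN query body; the field name is a placeholder."""
    return {"size": k, "query": {"knn": {field: {"vector": query_vector, "k": k}}}}


def min_max_normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale scores to [0, 1] so k-NN and BM25 scores become comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_rank(
    vector_scores: Dict[str, float],
    keyword_scores: Dict[str, float],
    vector_weight: float = 0.7,    # weights from the article's example
    keyword_weight: float = 0.3,
) -> List[Tuple[str, float]]:
    """Weighted sum of normalized scores; a missing score counts as 0."""
    v = min_max_normalize(vector_scores)
    kw = min_max_normalize(keyword_scores)
    combined = {
        doc: vector_weight * v.get(doc, 0.0) + keyword_weight * kw.get(doc, 0.0)
        for doc in set(v) | set(kw)
    }
    # Highest score first; ties broken by document id for stable output.
    return sorted(combined.items(), key=lambda item: (-item[1], item[0]))


# Made-up scores for three clips, purely for illustration.
ranked = hybrid_rank(
    {"clip_a": 0.92, "clip_b": 0.80, "clip_c": 0.65},
    {"clip_b": 7.1, "clip_c": 3.0},
)
for doc, score in ranked:
    print(f"{doc}: {score:.3f}")
```

In this made-up example clip_a still ranks first on vector similarity alone, while the keyword match lifts clip_b well above clip_c.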

Cost-Effective Deployment and Prerequisites

Deploying such a sophisticated AI data lake requires careful consideration of infrastructure and costs, which AWS has optimized for efficiency. Processing the full dataset, approximately 8,480 hours of video content, came to an estimated first-year total of $27,328 (with OpenSearch Service on-demand) or $23,632 (with OpenSearch Service Reserved Instances).

The breakdown below covers the one-time ingestion costs and the annual OpenSearch Service charge:

- Amazon EC2 compute: $421 (4x c7i.48xlarge spot instances for 41 hours)

- Amazon Bedrock Nova Multimodal Embeddings: $17,096 (30.5M seconds at $0.00056/second batch pricing)

- Nova Pro tagging: $571 (792K videos, approx. 600 tokens/video average)

- Amazon OpenSearch Service: $9,240 (on-demand annual) or $5,544 (Reserved annual)
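The line items above can be checked against the quoted first-year totals with a few lines of arithmetic. All figures come from the article; only the variable names are invented for the example.

```python
EMBEDDING_PRICE_PER_SECOND = 0.00056   # batch pricing quoted in the article
VIDEO_SECONDS = 8_480 * 3600           # 8,480 hours, about 30.5M seconds

# One-time ingestion costs in USD.
ingestion = {
    "ec2_compute": 421,
    "nova_embeddings": round(VIDEO_SECONDS * EMBEDDING_PRICE_PER_SECOND),
    "nova_pro_tagging": 571,
}

# Annual OpenSearch Service cost in USD, by pricing plan.
opensearch_annual = {"on_demand": 9_240, "reserved": 5_544}

one_time = sum(ingestion.values())
first_year = {plan: one_time + cost for plan, cost in opensearch_annual.items()}

print(f"embeddings:        ${ingestion['nova_embeddings']:,}")
print(f"one-time subtotal: ${one_time:,}")
for plan, total in first_year.items():
    print(f"first year ({plan}): ${total:,}")
```

The embedding charge makes up the bulk of the one-time spend, so the per-second price and the total video duration are the figures to watch when adapting the estimate to another library.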

Prerequisites for Implementation: To replicate or adapt this solution, you will need:

- An AWS account with access to Amazon Bedrock in us-east-1.

- Python 3.9 or later.

- AWS Command Line Interface (AWS CLI) configured with appropriate credentials.

- An Amazon OpenSearch Service domain (r6g.large or larger recommended), version 2.11 or later, with the k-NN plugin enabled.

- An Amazon S3 bucket for video storage and embedding outputs.

- AWS Identity and Access Management (IAM) permissions for Amazon Bedrock, OpenSearch Service, and Amazon S3.

The solution leverages specific AWS services and models:

- Amazon Bedrock with amazon.nova-2-multimodal-embeddings-v1:0 for embeddings.

- Amazon Bedrock with us.amazon.nova-pro-v1:0 or us.amazon.nova-2-lite-v1:0 for tagging.

- Amazon OpenSearch Service 2.11+ with k-NN plugin.

- Amazon S3 for storage.
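On the OpenSearch side, the two indexes described in the ingestion section could be defined roughly as follows. This is a sketch under assumptions: the field names (video_id, start_seconds, embedding, tags) are illustrative, and the k-NN method is left at the engine default because the article does not specify one.

```python
import json

EMBEDDING_DIMENSION = 1024  # dimension chosen in the article

# Vector index: one document per 15-second segment.
segment_index_body = {
    "settings": {"index": {"knn": True}},   # enables k-NN search on this index
    "mappings": {
        "properties": {
            "video_id": {"type": "keyword"},
            "start_seconds": {"type": "float"},
            "embedding": {"type": "knn_vector", "dimension": EMBEDDING_DIMENSION},
        }
    },
}

# Text index: one document per video, holding the generated tags.
tag_index_body = {
    "mappings": {
        "properties": {
            "video_id": {"type": "keyword"},
            "tags": {"type": "text"},       # analyzed text, searchable with BM25
        }
    },
}

# These bodies would be sent in an index-creation request, for example
# PUT /<index-name>; here they are only printed.
print(json.dumps(segment_index_body, indent=2))
print(json.dumps(tag_index_body, indent=2))
```

Keeping segment vectors and per-video tags in separate indexes mirrors the separation the article describes, at the cost of joining results on video_id at query time.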

Implementing the Multimodal Video Search Solution

Getting started with this architecture involves a structured approach to setting up your AWS environment. The first crucial step is establishing the necessary permissions.

Step 1: Create IAM Roles and Policies

You'll need to create an IAM role that grants your application or service the authority to interact with the various AWS components. This role must include permissions to invoke Amazon Bedrock models (for both embedding generation and tagging), write data to OpenSearch indexes, and perform read/write operations on Amazon S3 buckets where your video content and processed outputs reside.

Here's an example of a foundational IAM policy structure:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Action": [
            "bedrock:InvokeModel",
            "bedrock:StartAsyncInvoke",
            "bedrock:GetAsyncInvoke",
            "bedrock:List"
          ],
          "Resource": "arn:aws:bedrock:us-east-1::foundation-model/amazon.nova-*"
        },
        {
          "Effect": "Allow",
          "Action": [
            "s3:GetObject",
            "s3:PutObject",
            "s3:ListBucket"
          ],
          "Resource": [
            "arn:aws:s3:::your-video-bucket/*",
            "arn:aws:s3:::your-video-bucket"
          ]
        },
        {
          "Effect": "Allow",
          "Action": [
            "es:ESHttpPost",
            "es:ESHttpPut",
            "es:ESHttpDelete",
            "es:ESHttpGet"
          ],
          "Resource": "arn:aws:es:us-east-1:*:domain/your-opensearch-domain/*"
        }
      ]
    }

This policy grants the permissions the pipeline needs to operate. Replace placeholders such as your-video-bucket and your-opensearch-domain with your actual resource names. After the IAM setup, you would configure your S3 buckets, set up your OpenSearch Service domain with k-NN enabled, and develop the orchestration logic that calls the Bedrock APIs for ingestion.

With this framework, media and entertainment companies can manage, discover, and monetize their ever-growing content libraries more efficiently. The solution shows how multimodal AI models, integrated with scalable cloud infrastructure, can address real-world Enterprise AI challenges in content management and accessibility, fostering advancements similar to those seen in Agentic AI workflows.

Original source

https://aws.amazon.com/blogs/machine-learning/multimodal-embeddings-at-scale-ai-data-lake-for-media-and-entertainment-workloads/